From Duterte Troll Army to AI Troll Farm: The System Now Running Your Feed

In 2016, Duterte’s troll army still looked human: call center kids, OFWs, volunteers flooding Facebook with the same lines. In 2026, the troll farm is hardware, AI, and a few operators running thousands of “people” from a rack of phones. This piece walks through how that system works, how Filipinos helped build it, and why even the original trolls are now being replaced by the machine they trained.


Duterte’s people were the first ones who made me feel it.

Not just the violence on the news, not just the presscons. It was the feeling of being surrounded online by my own countrymen, cheering the killings, mocking the dead, attacking anyone who asked too many questions. And somewhere behind that wave was a man named Nic Gabunada, stitching together pages and groups and “volunteers” into a network that turned Facebook into a weapon.

Ten years later, in 2026, I’m still in the same feeds, still in the same arguments.

But I’m no longer sure I’m arguing with people.

THE QUESTION THAT WON’T LEAVE ME

For a long time, the anger was simple.

Filipinos were being paid to lie for Duterte, then for Marcos, then for whoever needed noise. They harassed journalists. They red‑tagged activists. They swarmed anyone who posted the “wrong” thing about China or the ICC. It felt clear: these are traitors, Filipinos who sold the country cheap for a monthly retainer and some crumbs of access.

And this wasn’t just random “fans” getting carried away. During the 2016 campaign, Gabunada’s operation assigned coordinators to different regions and to OFWs, ran on a “message of the week,” and used hundreds of paid keyboard workers plus influencers to flood Facebook with the same lines from different mouths. When a journalist asked a hard question, you could watch the choreography: fake and real pro‑Duterte accounts swarming their page with death threats and rape jokes, amplified inside groups that had been primed for that week’s target. The “social media army” Duterte later admitted hiring wasn’t an accident; it was a structure.

But as I kept reading through investigations, technical reports, and even hardware catalogs, the picture began to change.

The troll isn’t just a person at a keyboard anymore. It’s a job description, a rack of phones, a rented proxy, a prompt sent into an AI model. It’s a whole machine where some Filipinos are operators, some are customers, and some are just raw material.

So the question that won’t leave my mind is this: in 2026, when I walk into a toxic thread, am I still dealing with traitors—or am I shouting into a room full of devices and stolen faces that only look Filipino from the outside?

HOW DUTERTE AND NIC GABUNADA NORMALIZED THE TROLL INDUSTRY

If you want to understand the machine, you have to start when it still ran mostly on people.

In 2016, Duterte didn’t just have fans. He had an online strategy. With a budget of $200,000, his camp treated Facebook as the main battlefront, not just an add‑on. Supporters coordinated inside private groups. Pages pushed “message of the week” talking points. Overseas Filipinos amplified every clip and meme.

At the center of that social media strategy was Nic Gabunada, an ad man and PR operator who helped build what was later described as an “amazing social media network”: dozens of pages, fake accounts, and clusters of groups that boosted Duterte and allied candidates. In 2019, Facebook took down around 200 accounts, pages, and groups it linked to him for “coordinated inauthentic behavior,” saying they used fake identities to manage pages and comment in groups.

That takedown was a glimpse behind the curtain: troll armies were not random; they were organized networks with structures, budgets, and leadership.

From there, troll work stopped being a scandal and became a line item in the budget of a political campaign. PR firms and political operatives folded “social media operations” into campaign packages. The idea that you could pay Filipinos to shape the feed—24/7, at scale—was now normal.

THE FILIPINO TROLL: TRAITOR, WORKER, OR BOTH?

When I first tried to make sense of this, the word that came easiest was betrayal.

Betrayal by the person who laughs along with “nanlaban” (“he fought back”) jokes. By the one who calls human rights lawyers “NPA coddlers” on command. By the accounts that swarm an activist’s page the moment she posts about a body count or a land dispute.

But the more you look at the labor side, the messier it gets.

Reports and testimonies over the years show different pay brackets. Some trolls got paid per post or per shift, others had monthly deals. Some stories put troll farm earnings in the 30,000 to 70,000 peso range for certain roles. The LA Times once described troll farm work as a “growth industry,” with young Filipinos earning around 10,000 pesos a month or more for basic engagement work, higher for coordinators. Many were students, underemployed graduates, or people between jobs, recruited through classmates, campaign staff, Facebook groups, and word of mouth.

You still made a choice when you signed up. You chose to be the person who tells a grieving mother “buti nga” (“serves you right”). You chose to flood the thread when someone posts about corruption or Chinese ships in our waters. But that choice sat on top of low wages, unstable work, and a political culture where loyalty to a patron often pays better than loyalty to the truth.

So is that person a traitor, a worker, or some uncomfortable mix of both?

That’s the tension I still find myself in.

DIFFERENT FILIPINO ROLES INSIDE THE MACHINE

“Troll” makes it sound flat. In reality, Filipinos occupy very different slots in this system.

There are the architects: the Gabunada types, campaign strategists, PR consultants who design the narrative, choose the enemies, coordinate spend, and talk directly to politicians or foreign clients. They decide what line to push about the ICC, about China, about the opposition, about Western allies.

There are the managers: the ones who run operations day‑to‑day. They recruit workers, write simple scripts (“comment this under every post about X”), manage spreadsheets of accounts, and send reports showing “reach” and “sentiment” to clients. They are the middle layer between money and manpower.

There are the rank‑and‑file: the people sitting in small offices or at home, running multiple accounts, copy‑pasting comments, reacting, mass reporting, joining group chats with talking points. They’re the ones who get told to trend a hashtag or drown a journalist’s post under insults.

And then there are the unwilling: Filipinos whose names, faces, and IDs get harvested in data breaches or sold in dark‑web dumps, then turned into “verified” accounts using AI‑composited selfies and look‑alike hunting tools. They wake up one day to find a stranger using their face to post about issues they never touched.

The architects aren’t just telling people what to say anymore. They’re feeding lines and examples into AI so the fake voices sound exactly like us—Taglish, Bisaya jokes, even the way titos argue about geopolitics.

When I say “traitor,” I’m not talking about all four groups the same way.

I mean it most for the architects and managers who know exactly what they’re doing and whom they’re serving. My assessment is different for some kid paid per comment, and different again for someone whose passport scan became a fake account that screams at strangers in their name.

FROM FACEBOOK ARMIES TO PHONE FARMS

That first Duterte wave was still mostly human. By 2026, the hardware has caught up.

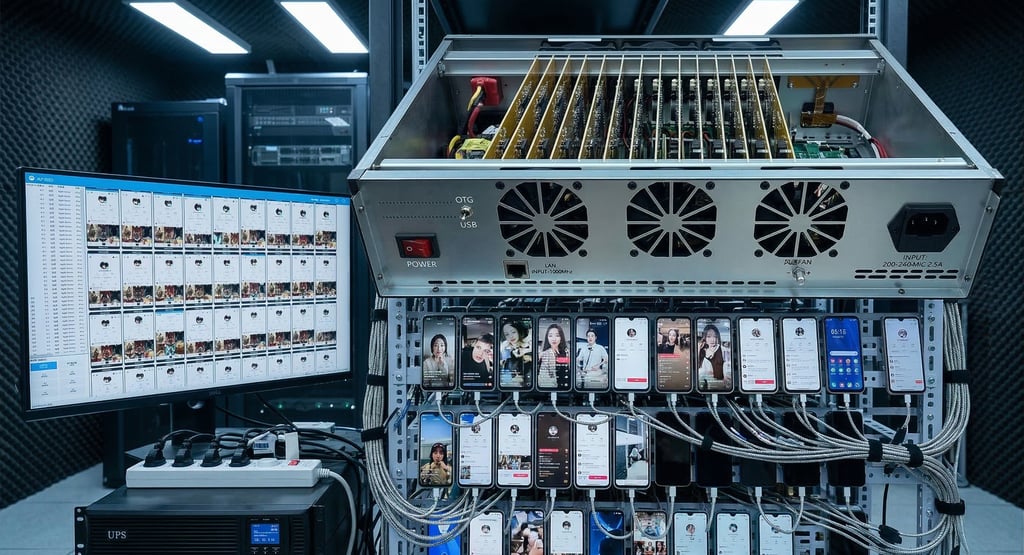

Investigations and industry reports describe phone and SIM farms operating in China, Vietnam, Eastern Europe, even inside the US. Instead of rows of people with one phone each, you now have metal boxes—chassis sourced from electronics hubs like Guangdong—holding 20, 40, 60 stripped‑down smartphone motherboards.

No screens. No batteries. Just the brain of each phone slotted into a frame, connected to a central power supply so nothing swells or explodes, and cooled by fans or even liquid systems so they can run non‑stop. One rack can hold hundreds of “phones” that never see human hands after installation.

In the next room, one or two operators sit at PCs.

They see dashboards where each board shows up as a device. They run software that mirrors every tap and swipe from a “master” phone onto dozens or hundreds of others. They push an update to all devices at once. They can open the same app on 500 phones and have them behave slightly differently.

In 2016, you needed a room full of people to generate that kind of traffic.

In 2026, you can get similar volume from a handful of operators and a wall of motherboards.

MASKS, BEHAVIOR, AND FAKE IDENTITIES

If that was all—just more phones and fewer people—platforms could probably adapt. The real problem is the masks, the tricks that hide where the traffic really comes from and who is behind it.

The first mask is location. In 2026, it’s easy to rent internet connections that look like they belong to real homes and real phone users. For a few dollars, a farm can tap into millions of “residential” and “mobile” lines around the world. Some services even let you keep the same “home” for hours, or pretend you’re on a specific telco, just by clicking a menu.

For us, that means one small office can pretend to be thousands of ordinary people spread across different cities. For platforms, blocking those lines is risky, because they might also hit real families who happen to share the same provider.

The second mask is the device itself. Anti‑detect browsers and fingerprint spoofing tools help bots copy the tiny technical details of real phones and browsers: fonts, graphics settings, even the TLS handshake, the exact way a browser says hello to a website. On paper, the fake traffic looks like a normal mix of Androids and iPhones on Wi‑Fi and LTE.

The third mask is behavior.

Security teams have started leaning on “behavioral biometrics”—the way you type, scroll, pause, and move your phone—as proof that you’re human. In response, farms now generate synthetic behavior: automation scripts that move the cursor along messy, curved paths; random delays before clicks; scrolling that stops and starts like a bored commuter.

Some systems even broadcast fake sensor data directly into the phone’s operating system—accelerometer and gyroscope readings that look like someone walking with the device in their pocket, turning it, putting it down on a table. On the platform’s side, it feels like a living hand is holding the phone, even if the board is screwed onto a rack in a windowless room.

And then there’s identity.

KYC (Know Your Customer) checks used to be the last line of defense: selfies with ID, liveness tests, video proofs. Now fraudsters use AI to search for faces that resemble stolen ID photos, then composite “selfies with ID” convincing enough to pass automated checks. They don’t just steal your ID; they use “look‑alike hunting” bots to find a Filipino face on Instagram or Facebook that matches a stolen passport, then use AI to glue them together into a verified persona. Some systems even inject prerecorded or generated video straight into the data stream, bypassing the physical camera.

So you get to a comment thread on an election story and see a “verified” Filipino account with a real‑looking face defending a foreign line.

You don’t know if you’re dealing with:

a Filipino who knowingly took the job

a synthetic persona built from scratch

or a victim whose face and ID were stolen and puppeted by someone else

The anger doesn’t disappear, but it changes shape.

THE PRICE OF BUYING A CROWD IN 2026

If all of this sounds expensive, it isn’t.

The going rates in 2026 look something like this:

Around 17 dollars for 1,000 followers, usually powered by those dense phone farms.

About 5 dollars for 1,000 generic comments—cheap, copy‑paste noise.

Up to 80 dollars for 1,000 comments that sound like real people from a specific country, often written or guided by AI.

Less than 1.50 dollars to join a bullying campaign in some places, where local trolls get paid cents to attack a target.

And on the labor side, maybe 740 to 2,480 US dollars a month for a more skilled operator in countries like the Philippines.

For the price of one mid‑level salary, a client can now buy followers, comments, harassment, and a semi‑automated AI army that never sleeps.
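To make that arithmetic concrete, here is a small Python sketch that prices a hypothetical order using the per‑1,000 rates quoted above and compares it with the low end of the quoted operator salary. The order sizes are invented for illustration, and the bullying‑campaign rate is left out because it isn't clear what unit it is priced per.

```python
# Rates quoted in this article, in USD per 1,000 units.
PRICE_PER_1000 = {
    "followers": 17.00,
    "generic_comments": 5.00,
    "localized_comments": 80.00,  # AI-written or AI-guided, country-specific
}

# Low end of the quoted 740 to 2,480 USD monthly range for a skilled operator.
MONTHLY_OPERATOR_SALARY_LOW = 740.00


def order_cost(order: dict[str, int]) -> float:
    """Total USD cost of an order, where each item is priced per 1,000 units."""
    return sum(PRICE_PER_1000[item] * qty / 1000 for item, qty in order.items())


# Hypothetical campaign order, chosen only for illustration.
order = {
    "followers": 10_000,
    "generic_comments": 20_000,
    "localized_comments": 5_000,
}

total = order_cost(order)
print(f"Order cost: ${total:,.2f}")
print(f"Share of one month's low-end operator salary: "
      f"{total / MONTHLY_OPERATOR_SALARY_LOW:.0%}")
```

Under these assumptions, 10,000 followers, 20,000 generic comments, and 5,000 localized comments come to 670 dollars, still less than one month of the lowest quoted operator salary.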

For a politician, a corporation, or a foreign state actor, a synthetic crowd is often cheaper and more reliable than a full human troll team.

The “value” of a Filipino traitor is dropping.

THE SLOW COLLAPSE OF TROLL JOBS (AND THE JOBS THAT REMAIN)

So where does that leave the people who used to sit in front of the screens?

On the content side, generative AI models now handle a lot of the writing. They can produce posts, comments, and replies in Filipino English, Tagalog, Bisaya, or some mix of all three. They can be tuned to sound like a tita from Cavite, a call center agent in BGC, or an OFW in Dubai.

On the logistics side, orchestration tools and phone farms schedule, post, like, and reply at scale. One operator can spin up a hundred personas, each with its own timeline of activity, its own “sleeping hours,” its own mix of political posts and random memes.

The result is a slow collapse in demand for low‑level troll labor.

There are still human jobs, but fewer. The ones that remain are more specialized:

People who feed the AI the right prompts and examples so it picks up local slang, political in‑jokes, and the correct tone for each persona. The human trolls left are no longer just typists; they’re the ones giving the machine the exact cadence of a BPO worker or a frustrated tita so the AI’s voice feels authentic.

People who monitor dashboards, adjust when platforms start flagging accounts, and tweak proxy or fingerprint settings.

People who design the overall narrative arc: when to push outrage, when to pivot to distraction, when to go quiet.

The mass of workers who used to earn 10,000–30,000 pesos tagging critics and spamming news pages in 2016 now face a shrinking market in 2026. Some try to pivot to “legit” content work and discover that AI is also flooding that space. Others get stuck with a reputation that makes it harder to find other jobs, while the system they helped feed continues without them.

That’s how fast, it turns out, a person can be replaced.

What makes it worse is this: the algorithms that are replacing them learned from their own work. The memes they made, the insults they repeated, the lies they boosted from 2016 onwards all became patterns for newer AI‑driven operations to copy and refine.

They betrayed the country for jobs that were always designed to be temporary—and now the machine is out‑trolling them, using the language they taught it.

ARE THEY STILL TRAITORS WHEN THE MACHINE TAKES OVER?

I still feel the old anger when I think of those early Duterte years.

Gabunada’s networks didn’t just sell a candidate; they helped create an atmosphere where killings were cheered and critics were hounded. Troll armies defended policies that broke institutions and lives. People suffered while other Filipinos collected their pay and typed lol.

That’s not something I can wave away as “just work.”

And it didn’t stop in 2016. In 2025, the Senate hauled in a Makati PR firm called InfinitUs after evidence surfaced that it had been paid by the Chinese embassy to provide online “services” that allegedly included attacks on Philippine officials and pro‑Beijing messaging. Senators openly floated treason and espionage charges, saying operations like that “undermine the sovereignty of our country.”

The company denied running disinformation campaigns, framing the payment as for an awards event and related services. The investigations are still ongoing. But for me, that hearing put a concrete name and address on the fear: that Filipino‑registered entities can become paid instruments of a foreign power in our own information space.

That’s the highest form of betrayal I can think of—selling not just your own conscience, but your country’s voice.

At the same time, the moral map in 2026 is more complicated than “all trolls are the same.”

Some Filipinos still sit at the top of these operations. They negotiate contracts. They coordinate with local or foreign clients who want pro‑China narratives pushed in Filipino spaces, or who want Western politics influenced from a Makati office. They know exactly what they’re doing.

Others are stuck in the middle: semi‑anonymous “social media specialists” inside agencies who don’t see the full picture, only their small piece of the workflow.

And some never chose any of this: their leaked IDs, stolen selfies, or scraped photos end up in AI systems that clone their face into accounts they will never log into.

If I call them all traitors, I flatten the story.

If I stop using the word at all, I let too many people off the hook.

So this is how I see it: there are Filipinos who knowingly took money to help hurt other Filipinos, and I still call that betrayal. There are others who became cogs in a machine they didn’t fully understand and are now being pushed out by the same AI they helped train. And there are those who were turned into raw material against their will.

The machine doesn’t care which is which. But we have to.

THE SYSTEM THAT OUTLIVES THEM—AND WHAT WE DO WITH IT

The hardest part to accept is that even if every Filipino troll resigned tomorrow, the system would keep going.

The phone farms would still be humming in Guangdong, Hanoi, New York, or maybe some secret location here in the Philippines. The AI personas would still be ready to spin up a thousand fake “kababayans” arguing in English, Tagalog, or Taglish about our elections, our alliances, our history. The proxy networks and fingerprinting tools would still be hiding their tracks.

Defenders are trying new tactics.

Platforms take down fake networks, including those linked to Philippine politics. Regulators in the EU are rolling out AI rules that ban certain kinds of manipulative systems and force companies to show how they manage risk.

Meta likes to say it has this under control. In March 2026 it joined a “Joint Disruption Week” with the FBI and Royal Thai Police, helping disable over a hundred thousand accounts tied to Southeast Asian scam and troll centers. It talks about AI “Integrity Systems,” a Llama‑based firewall, advertiser verification, and a promise that by the end of the year most ad money will come from verified buyers instead of shadowy clients.

On paper, Meta even claims that, working with our own DICT, it can take down election‑related disinformation within an hour of a government report. But our IT secretary, Henry Aguda, has already called them out for something more basic: the machine still doesn’t understand us. It misses Bisaya and Taglish, it misreads our sarcasm, it lets local venom pass through filters designed in Menlo Park while sometimes flagging organic posts from real people.

And the “one hour” promise doesn’t always show up on our screens. In a February 2026 hearing, Senator Raffy Tulfo had to berate Meta officials because a deepfake of President Marcos stayed up for more than a week despite being reported through official channels. Meta’s answer was the same line we’ve heard for years: they were still “processing it.”

Here at home, the Senate is finally saying the quiet part out loud. In late 2025 and early 2026, hearings on fake news and troll farms turned into debates about whether government should ever hire “troll armies” at all. Senator Robinhood Padilla filed Senate Bill No. 1490, the Anti‑Troll Farm Act, which seeks to criminalize the financing, organizing, and operation of troll farms, with proposed prison terms of 6 to 12 years for those who bankroll these operations.

And here’s the part that’s hard to ignore: the same senator pushing this bill has also stood beside and defended the very camp that normalized troll armies in our politics in the first place. The system is finally being named as a crime, but the original patrons of that system are still treated as allies, not architects.

Anyway, at least for once, the law is looking not just at the kid paid per comment, but at the people who sign the checks.

Security outfits and infra providers are experimenting with tricks like Cloudflare’s “AI Labyrinth.” Instead of simply blocking misbehaving bots, the system quietly diverts them into a maze of AI‑generated pages where they keep clicking and scraping, thinking they’re still on the real site. Operators don’t get a clean error message. They just watch their bots burn CPU time and bandwidth arguing with junk content, their client’s budget literally dissolving into irrelevant words.
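The general idea can be sketched in a few lines of Python. This is a toy model, not Cloudflare’s actual implementation: it assumes a maze where each fake page is generated deterministically from its URL and links only to more fake pages. The function names, the word list, and the `/maze/` path are all hypothetical.

```python
import hashlib
import random
from collections import deque

# Hypothetical filler vocabulary; a real system would generate far richer text.
FILLER_WORDS = [
    "balita", "ulat", "datos", "update", "opinion", "analysis",
    "barangay", "report", "survey", "trend", "source", "context",
]


def decoy_page(path: str, n_words: int = 30, n_links: int = 4) -> dict:
    """Build a fake page for `path` that links only to more fake pages.

    The random generator is seeded from the path, so revisiting the same URL
    returns the same content and the maze looks stable to a scraper.
    """
    seed = int.from_bytes(hashlib.sha256(path.encode("utf-8")).digest()[:8], "big")
    rng = random.Random(seed)
    body = " ".join(rng.choice(FILLER_WORDS) for _ in range(n_words))
    links = [f"/maze/{rng.getrandbits(32):08x}" for _ in range(n_links)]
    return {"path": path, "body": body, "links": links}


def crawl(start: str, max_pages: int) -> int:
    """Simulate a naive scraper that follows every link until its budget runs out."""
    seen: set[str] = set()
    queue = deque([start])
    while queue and len(seen) < max_pages:
        path = queue.popleft()
        if path in seen:
            continue
        seen.add(path)
        queue.extend(decoy_page(path)["links"])
    return len(seen)


page = decoy_page("/maze/start")
print(page["links"])
# Every page yields new links, so the scraper only stops at its own page budget.
print(crawl("/maze/start", max_pages=200))
```

The design point is that the maze never runs out: each page spawns several new ones, so a bot that blindly follows links spends its whole budget on filler instead of on the real site.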

Somewhere, right now, a troll farm is paying for workers and electricity so their bots can fight with AI‑generated junk instead of with you.

It’s clever. It’s satisfying in a small way.

It doesn’t change the bigger reality.

For a Filipino online in 2026, this is the situation:

Any sudden wave of comments, DMs, or “public opinion” around a hot issue might be heavily manufactured.

You might be arguing with a real person, a farm operator, a synthetic persona, or a stolen face. You won’t be able to tell which.

People you know may have taken troll work out of desperation, and now they might be losing even that job to AI while the damage they helped do stays in the culture.

So what do we do with that?

Maybe the first step is to change how we read the crowd. When a post explodes with hostility, we can stop telling ourselves “lahat sila galit sa’yo” (“they’re all angry at you”) and start asking “magkano ang ginastos para dito” (“how much was spent on this?”). When a relative casually mentions doing “online work” for a campaign or a foreign page, we can challenge them without pretending they were the only one who ever faced that temptation. When companies and politicians brag about “engagement,” we can remember how cheap engagement has become.

And for those of us who write, research, or just refuse to log off, maybe the hardest question is this:

If the machine learned from our worst choices (the trolls we hired, the memes we shared, the lies we let slide), what do we owe each other now, both to the people who betrayed us and to the ones being used without even knowing it?

SOURCES:

https://docs.google.com/document/d/1I17uUxNN3JkJOHe1wkWQLqUTQ35UFeekRYAlUrcW9aM/edit?usp=sharing

Contact us


inquiry@morningcoffeethoughts.org

© 2024. All rights reserved.
